In the video space, there’s always a lot of talk about these number ratios – 4:4:4, or 4:2:2, or 4:1:1, but what exactly do they mean? Recently, someone argued with me that it was better to convert every video clip from my Canon Rebel T2i DSLR camera into a 4:4:4 intermediate codec before editing; that this would make the color magically “better” and that editing natively was somehow bad. They were wrong, and I’m going to explain why.

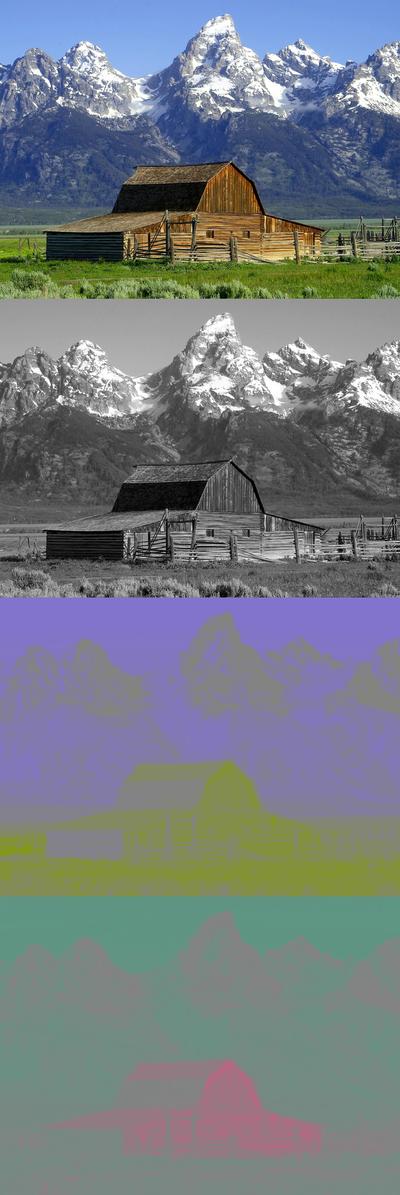

Before you read on, make sure you’ve read my earlier articles on 32-bit floating point and on YUV color, and look at the picture from the Wikimedia Commons site of the barn in YUV breakdown.

In the picture of the barn, try to look at the fine detail in the U and V channels. Typically, without any brightness information, it’s hard to see any detail in the color channels. The naked eye just does a much better job distinguishing brightness than color. This holds true for moving pictures, too. If the video uses YUV color space, the most important data is in the Y channel. You can throw away a lot of the color information, and the average viewer can’t tell that it’s gone.

One trick that video engineers have used for years is to toss away a lot of the color information. Basically, they can toss away the color values on every other pixel, and it’s not very noticeable. In some cases, they throw away even more color information. This is called Color Subsampling (you’ll also see it called chroma subsampling), and it’s a big part of a lot of modern HD formats for video.

When looking at color subsampling, you use a ratio to express what the color subsampling is. Most of us are familiar with these numbers: 4:4:4, or 4:2:2, or 4:1:1, and most of us are aware that bigger numbers are better. Fewer people understand what the numbers actually mean. It’s actually pretty easy.

Let’s pretend that we are looking at a small part of a frame – just a 4×4 matrix of pixels in an image:![]()

In this example, every pixel has a Y value, a Pb value, and a Pr value (Pb and Pr are the two color-difference channels, which I’ve been loosely calling U and V). If you look at a line of four pixels and count the values, there are 4 values of Y, 4 values of Pb, and 4 values of Pr. In color shorthand, we’d say that this is a 4:4:4 image.

4:4:4 color is a platinum standard for color, and it’s extremely rare to see a recording device or camera that outputs 4:4:4 color. Since the human eye doesn’t really notice when color is removed, most of the higher-end devices output something called 4:2:2. Here’s what that 4×4 matrix would look like for 4:2:2:![]()

As you can see, half of the pixels are missing the color data. Looking at that 4×4 grid, 4:2:2 color may not look that good, but 4:2:2 color is actually considered a very good color standard. Most computer software can fill in the missing color values by averaging the neighboring ones.
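To make that concrete, here’s a rough Python sketch of what happens to one line of four pixels. The Cb values are made up, and `subsample_422` and `rebuild_422` are names I’ve invented for illustration; real decoders generally use better filters than this simple neighbor average.

```python
def subsample_422(chroma_row):
    """Keep the chroma sample of every other pixel (the even positions)."""
    return chroma_row[::2]


def rebuild_422(kept, width):
    """Rebuild a full-width chroma row by averaging neighbouring kept samples."""
    row = []
    for x in range(width):
        left = kept[x // 2]
        if x % 2 == 0:
            # This pixel kept its own sample.
            row.append(left)
        else:
            # Missing sample: average the kept neighbours on either side.
            # At the right edge there is no neighbour, so repeat the last one.
            right = kept[min(x // 2 + 1, len(kept) - 1)]
            row.append((left + right) / 2)
    return row


cb_row = [100, 110, 120, 130]            # hypothetical Cb values for 4 pixels
kept = subsample_422(cb_row)             # what a 4:2:2 device would store
rebuilt = rebuild_422(kept, len(cb_row)) # what the decoder estimates
print("stored: ", kept)
print("rebuilt:", rebuilt)
```

In this example the second pixel comes back exactly right, but the last pixel comes back as 120 rather than its original 130. The decoder has no right-hand neighbor to average with, so all it can do is repeat what it kept.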

Let’s look at 4:1:1 color, which is used for NTSC DV video:![]()

Bleaccch. Three out of every four pixels have no color samples of their own, so 75% of the color information is tossed away! With bigger “gaps” between color information, it’s even harder for software to “rebuild” the missing values, but decoders do it anyway. This is one of the reasons that re-compressing DV can cause color smearing from generation to generation.

Let’s look at one other color subsampling, which is called 4:2:0, and is used very frequently in MPEG encoding schemes:![]()

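This diagram shows one of many ways that 4:2:0 color subsampling can be accomplished, but the general idea is the same – Luma samples for each pixel, one line has Pb samples for every other pixel, and the next line has Pr samples for every other pixel. Either way, each color channel ends up with one sample for every 2×2 block of pixels, which is a quarter of the full-resolution count.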
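If you’d rather see the arithmetic than the diagrams, this small Python sketch counts how many color samples each scheme keeps. The `SCHEMES` table of (horizontal, vertical) steps is my own shorthand, not part of any standard API, and the 4:2:0 entry models the net effect (one sample per color channel for each 2×2 block) rather than any particular placement of the samples. The frame sizes assume uncompressed 8-bit video.

```python
# (horizontal step, vertical step) between kept chroma samples, per channel.
# This table is an illustrative assumption, not a standard data structure.
SCHEMES = {
    "4:4:4": (1, 1),
    "4:2:2": (2, 1),
    "4:1:1": (4, 1),
    "4:2:0": (2, 2),
}


def ceil_div(a, b):
    """Integer division that rounds up, so edge pixels still get a sample."""
    return (a + b - 1) // b


def chroma_samples(width, height, scheme):
    """Samples stored per chroma channel for a width x height image."""
    h_step, v_step = SCHEMES[scheme]
    return ceil_div(width, h_step) * ceil_div(height, v_step)


def frame_bytes(width, height, scheme, bytes_per_sample=1):
    """Uncompressed size of one frame: full-resolution luma plus two chroma planes."""
    luma = width * height
    chroma = 2 * chroma_samples(width, height, scheme)
    return (luma + chroma) * bytes_per_sample


for name in SCHEMES:
    kept = chroma_samples(4, 4, name)
    print(f"{name}: {kept} of 16 samples per color channel in a 4x4 block, "
          f"{frame_bytes(1920, 1080, name):,} bytes per 1920x1080 frame")
```

For the 4×4 block that works out to 16, 8, 4, and 4 samples per color channel. Notice that 4:1:1 and 4:2:0 keep the same amount of color; they just spread the loss differently, all horizontal in one case and split between horizontal and vertical in the other.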

With a color subsampled image, it’s up to the program decoding the picture to estimate the missing pixel values, using the surrounding intact color values, and providing smoothing between the averaged values.

Okay – we’ve defined what color subsampling is. Now, how does that relate to my friend’s earlier argument?

Well, in my DSLR camera, the color information is subsampled to 4:2:0 in the camera. In other words, the camera is throwing away three quarters of the color samples before the file is ever written. It’s the weakest link in the chain! Converting from 4:2:0 to 4:4:4 at this stage doesn’t “magically” bring back the thrown-away data – the data was lost prior to hitting the memory card. It’s just taking the data that’s already there, and “upsampling” the missing color values by averaging between the adjoining values.
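Here’s a deliberately extreme Python sketch of that point. The checkerboard of chroma values is made up, the function names are mine, and I’m assuming the simplest possible scheme: keep the top-left sample of each 2×2 block, then repeat it on the way back up. Real cameras and decoders filter more carefully, but no filter can recover values that were never stored.

```python
def subsample_420(plane):
    """Keep one chroma sample per 2x2 block (here: the top-left one)."""
    return [row[::2] for row in plane[::2]]


def upsample_nearest(small, width, height):
    """Rebuild a full-size plane by repeating each kept sample over its 2x2 block."""
    return [[small[y // 2][x // 2] for x in range(width)] for y in range(height)]


# Hypothetical 4x4 chroma plane with fine detail (a checkerboard).
original = [
    [ 90, 200,  90, 200],
    [200,  90, 200,  90],
    [ 90, 200,  90, 200],
    [200,  90, 200,  90],
]

stored = subsample_420(original)            # what reaches the memory card
restored = upsample_nearest(stored, 4, 4)   # the "4:4:4" version after transcoding

# Count the pixels whose chroma no longer matches what the sensor saw.
wrong = sum(
    r != o
    for restored_row, original_row in zip(restored, original)
    for r, o in zip(restored_row, original_row)
)
print("stored samples:", stored)
print("pixels with wrong chroma after upsampling:", wrong, "of 16")
```

The upsampled plane has 16 values again, so on paper it is 4:4:4, but every one of them is derived from the same four stored samples.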

Inside Premiere Pro, the images will stay exactly as they were recorded in-camera for cuts-only edits. If there’s no color work going on, the 4:2:0 values remain untouched. If I need to do some color grading, Premiere Pro will upsample the footage to 4:4:4 on the fly. It does this very well and, in a lot of cases, in real time.

Going to a 4:4:4 intermediate codec does have some benefits. Because every frame is upsampled to 4:4:4 during the transcode, your CPU doesn’t have as much work to do while you edit, which may give you better performance on older systems. But there’s a huge time penalty in transcoding, and it doesn’t get you any “better color” than going native. Whether you upsample prior to editing or do it on-the-fly in Premiere Pro, the color info was already lost in the camera.

In fact, I could argue that Premiere Pro is the better solution for certain types of editing because we leave the color samples alone when possible. If the edit is re-encoded to a 4:2:0 format, Premiere Pro can use the original color samples and pass those along to the encoder in certain circumstances. Upsampling and downsampling can cause errors, since the encoder can’t tell the difference between the original color samples and the rebuilt, averaged ones.
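A toy Python example shows how that drift can happen. Everything here is an assumption for illustration: the sample values are invented, the upsampler is a plain linear interpolation, and the “encoder” averages each pair of pixels when it goes back down to half resolution. This is not a description of what Premiere Pro or any particular codec does internally.

```python
def upsample_linear(kept):
    """Double the sample count: keep each sample, then insert the average
    of it and its right-hand neighbour (repeating the last one at the edge)."""
    full = []
    for i, value in enumerate(kept):
        nxt = kept[min(i + 1, len(kept) - 1)]
        full.append(value)
        full.append((value + nxt) / 2)
    return full


def downsample_average(full):
    """Halve the sample count by averaging each pair of neighbouring values.
    The encoder cannot tell original samples from interpolated ones."""
    return [(full[i] + full[i + 1]) / 2 for i in range(0, len(full), 2)]


camera_samples = [100, 140, 60, 180]   # hypothetical chroma samples from the camera

passthrough = list(camera_samples)     # native path: samples handed on untouched
round_trip = downsample_average(upsample_linear(camera_samples))
drift = [abs(a - b) for a, b in zip(camera_samples, round_trip)]

print("passthrough:", passthrough)
print("round trip: ", round_trip)
print("drift:      ", drift)
```

The passthrough samples are identical to what the camera recorded, while the round-tripped ones have moved by as much as 30 in this example. A different pair of filters would give different numbers, but the principle is the same: each extra up-and-down conversion is another chance to blur the color.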

I’m not trying to knock intermediate codecs – there are some very valid reasons why certain people need them in their pipeline. But for people just editing in the Adobe Production Premium suite, intermediate codecs won’t magically add more color data, and they may cost you a lot of time. Take advantage of the native editing in Premiere Pro CS5, and you’ll like what you see.
